
Multus is not a CNI in itself but a meta CNI plugin that enables the use of multiple CNIs in a Kubernetes cluster.

Tip – Store the desired node-ip in a config file before launching the command on the nodes. With this set, we can extract the join command and run it on our servers:

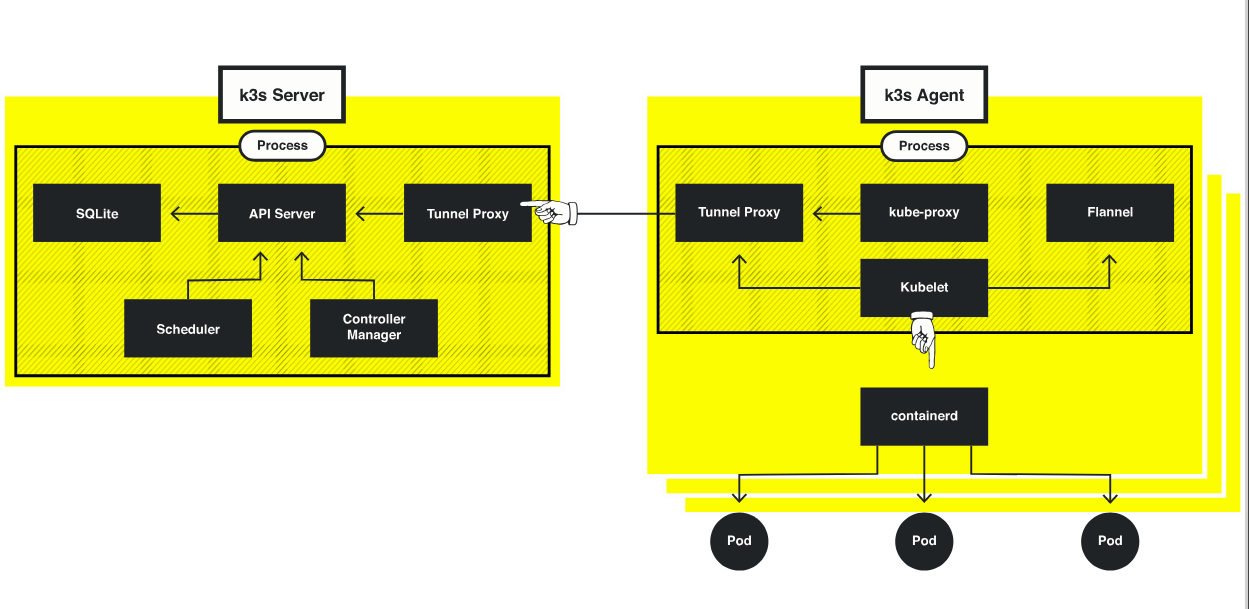
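The node-ip tip can be sketched in a few lines. This is a minimal sketch, not the exact procedure used here: it assumes the RKE2 config file lives at `/etc/rancher/rke2/config.yaml` and accepts a `node-ip` key, and the address `172.16.40.11` is a hypothetical placeholder for a node's VLAN40 address.

```python
import ipaddress
from pathlib import Path

# Assumption: RKE2 reads this file when the service starts.
RKE2_CONFIG_PATH = Path("/etc/rancher/rke2/config.yaml")


def render_node_ip_config(node_ip: str) -> str:
    """Return the YAML line that pins the address the node advertises."""
    ipaddress.ip_address(node_ip)  # raises ValueError on a malformed address
    return f"node-ip: {node_ip}\n"


def write_node_ip_config(node_ip: str, path: Path = RKE2_CONFIG_PATH) -> None:
    """Write the config file. Note: this overwrites any existing file at path."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(render_node_ip_config(node_ip))


if __name__ == "__main__":
    # Hypothetical address; print instead of writing so the sketch needs no root.
    print(render_node_ip_config("172.16.40.11"), end="")
```

Running `write_node_ip_config(...)` on each node before pasting the join command would put the setting in place first, which is the ordering the tip asks for.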

Although more common in bare metal environments, I’ll create a virtualised equivalent. In RKE2 vernacular, we refer to nodes that assume etcd and/or control plane roles as servers, and worker nodes as agents.

The network for the server nodes will reside on VLAN40 in my environment. It will act as the overlay/management network for my cluster and will be used for node-to-node communication.

Agent Nodes

Agent nodes will be connected to multiple networks:

- VLAN40 – Used for node-to-node communication.
- VLAN50 – Used exclusively by Longhorn for replication traffic. Longhorn is a cloud-native distributed block storage solution for Kubernetes.
- VLAN60 – Provides access to ancillary services.

For the purposes of experimenting, I will create my VMs first.

Agent VM Config

Rancher Cluster Configuration

We have to have an existing CNI for cluster networking, which is Canal in this example. Using Multus is as simple as selecting it from the dropdown list of CNIs.

The section “Add-On Config” enables us to make changes to the various addons for our cluster. The Canal CNI is a combination of both Calico and Flannel, which is why the specific interface used is defined in both sections.
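To make the Multus side more concrete: a secondary network such as the VLAN50 storage network is usually described to Multus through a NetworkAttachmentDefinition object. The following is a minimal sketch, not the configuration used in this environment. The object name, the namespace, and the interface name `eth1` are hypothetical, and macvlan is only one of several CNI plugins that could be used here.

```python
import json
from typing import Optional


def build_network_attachment(
    name: str,
    namespace: str,
    master_iface: str,
    ipam: Optional[dict] = None,
) -> dict:
    """Build a NetworkAttachmentDefinition manifest for a macvlan network."""
    cni_config = {
        "cniVersion": "0.3.1",
        "type": "macvlan",
        "master": master_iface,  # host NIC attached to the secondary VLAN
        "mode": "bridge",
        # An empty ipam dictionary creates the interface without an IP address;
        # a real setup would pick an IPAM plugin suited to its network.
        "ipam": ipam if ipam is not None else {},
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {"name": name, "namespace": namespace},
        # Multus expects the CNI configuration as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


if __name__ == "__main__":
    # Hypothetical names for illustration only.
    manifest = build_network_attachment("vlan50-storage", "longhorn-system", "eth1")
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be saved to a file and applied with `kubectl apply -f`. A Pod would then request the extra interface through the `k8s.v1.cni.cncf.io/networks` annotation.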

From my experience, some environments necessitate leveraging multiple NICs on Kubernetes worker nodes as well as the underlying Pods. Because of this, I wanted to create a test environment to experiment with this kind of setup.

- When working with a non-RKE cluster, you will need to use the provider’s tools or other tools to provide business continuity for the clusters.
- Deploy workloads with CPU and memory reservations and limits.
- Make sure that you have enough CPU and RAM!

3.1.4 Networking and Port Requirements

- Test that nodes in each role are able to communicate with nodes in other roles.
- Test that Pods on one host are able to communicate with Pods on other hosts.

Cluster Owners have full control over everything in the cluster.

3.2 Deploying a Kubernetes Cluster

3.2.1 RKE Configuration Options

3.2.3 Node Templates and Cloud Credentials

- See the details on how to set up a cloud provider.
- Infrastructure and Custom clusters allow you to set a cloud provider. This changes how the cluster operates. For example, if you choose the Amazon cloud provider, deploying a LoadBalancer Service into the cluster will create an Amazon ELB.
- Provide the authentication credentials needed.
- Clusters deployed in an infrastructure provider utilize node templates and can also use RKE templates.
- The requirement is a supported version of Docker.
- Linux control plane and one Linux worker (acts as the ingress controller).
- Manage an existing cluster with Rancher.
- Use kubectl apply to pull information from the Rancher server and install the agents.

3.3 Performance Basic Troubleshooting

3.3.1 What Could Possibly Go Wrong?

Troubleshooting a failed system can be frustrating, but there are some things to remember.

- “What changed?” Review those changes and see if rolling them back resolves the issue.
- Change one thing at a time and then test.
- If the problem vanishes as mysteriously as it arrived, know that it can return just as mysteriously.
